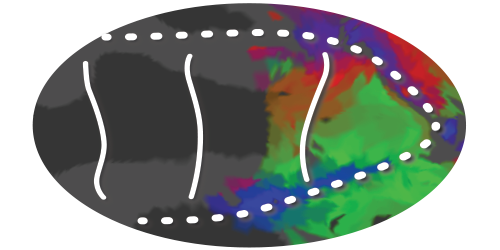

With fMRI, we measure a signal related to the activity of thousands of neurons in each voxel. Because neurons with similar receptive fields are close together on the retinotopic map, each population of neurons in a single fMRI voxel will have similar receptive fields. This means that it is possible to chart, for each voxel, its population receptive field, or pRF, i.e. the region of visual space that the voxel’s neural tissue analyses: its window onto the world. These mappings of visual preferences in the brain are unique to the specific participant, and give us detailed knowledge about how the visual system encodes visual information. Our lab is interested in finding how this encoding of visual information changes as a function of cognitive factors such as attention and reinforcement.

Recent studies using this encoding-model approach do exactly this. They emphasize the flexibility of information encoding in the retinotopic maps, which changes with cognitive factors such as attention. Thus, they bring us closer to a low-level understanding of the neuronal underpinnings of cognitive phenomena.
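To make the encoding-model idea concrete, here is a minimal sketch of a pRF forward model. It assumes an isotropic 2D Gaussian pRF and a simple sweeping-bar stimulus. The function names, grid size, and parameter values are illustrative assumptions, not the interface of our package. The sketch also leaves out the hemodynamic response, which a real fMRI analysis would need to account for.

```python
import numpy as np

def gaussian_prf(x0, y0, sigma, grid_x, grid_y):
    """Isotropic 2D Gaussian pRF centred at (x0, y0) with size sigma (hypothetical helper)."""
    return np.exp(-((grid_x - x0) ** 2 + (grid_y - y0) ** 2) / (2 * sigma ** 2))

def predict_response(prf, stimulus):
    """Predicted response per time point: overlap between stimulus aperture and pRF.

    stimulus has shape (n_timepoints, n_y, n_x); prf has shape (n_y, n_x).
    """
    return np.tensordot(stimulus, prf, axes=([1, 2], [0, 1]))

# Visual-field grid in degrees of visual angle (assumed extent: -10 to 10 degrees).
coords = np.linspace(-10, 10, 41)
grid_x, grid_y = np.meshgrid(coords, coords)

# A vertical bar, 2 degrees wide, sweeping from left to right in 1-degree steps.
n_steps = 21
bar_centers = np.linspace(-10, 10, n_steps)
stimulus = np.zeros((n_steps, coords.size, coords.size))
for t, center in enumerate(bar_centers):
    stimulus[t] = np.abs(grid_x - center) <= 1.0

# A voxel whose pRF sits 3 degrees right of fixation (assumed values).
prf = gaussian_prf(x0=3.0, y0=0.0, sigma=2.0, grid_x=grid_x, grid_y=grid_y)
response = predict_response(prf, stimulus)

# The predicted response is largest when the bar passes over the pRF centre.
peak_position = bar_centers[np.argmax(response)]
```

Estimating a pRF then amounts to searching for the `x0`, `y0` and `sigma` whose predicted time course best matches the voxel's measured signal. A shift in those best-fitting parameters between conditions, for example with and without attention, is the kind of change in encoding described above.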

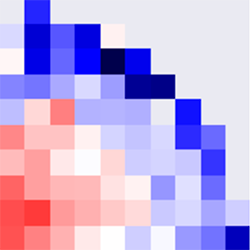

We have created a package that performs this type of pRF estimation, and we are in the process of validating it. Once validation is finished, we will release it for everyone to use.